Key Takeaways:

- Narratives Can Move Markets More Than Facts – A fictional AI doomsday scenario triggered massive short-term market losses, underscoring how powerful and fragile investor sentiment can be. Negative, vivid stories often outweigh data-driven rebuttals, especially in high-valuation environments.

- AI Outcomes Are Wide and Unknowable – The range of possible futures spans from economic disruption to a productivity boom, but both extremes rely on overly simplistic assumptions. In reality, the economy is a complex adaptive system that evolves in nonlinear and unpredictable ways.

- Stick to Timeless Investment Principles – Rather than trying to predict AI’s impact, investors should rely on diversification, liquidity, and discipline to navigate uncertainty. A well-constructed portfolio is designed to withstand exactly these kinds of unknown, narrative-driven conditions.

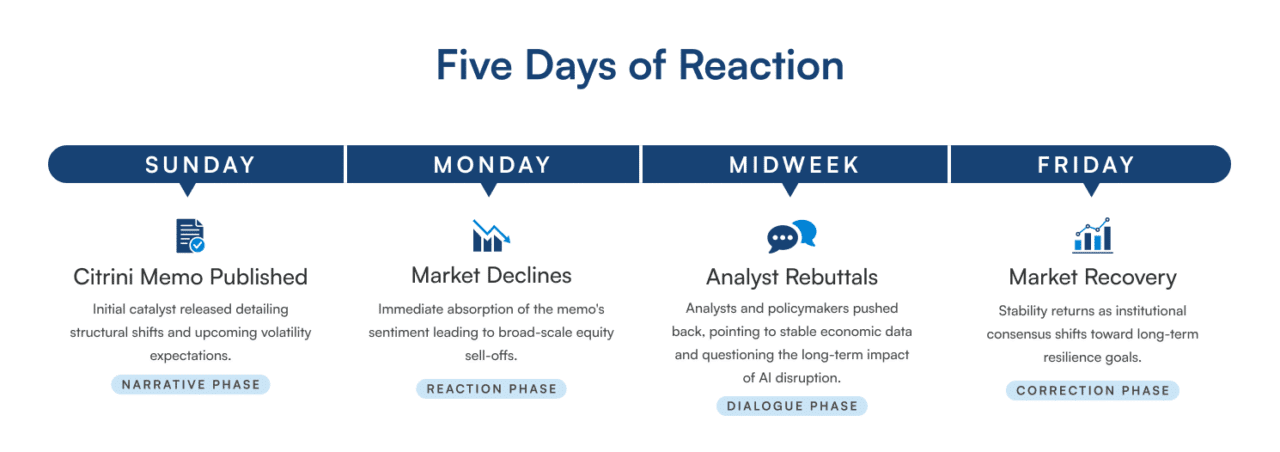

On a Sunday evening in late February, a small research firm called Citrini Research published a thought experiment on Substack. Written as a fictional memo to clients two and a half years from now (June 2028), it imagined a world where AI triggered a wave of white-collar job losses, consumer spending collapsed, and the S&P 500 dropped thirty-eight percent from its highs. The piece was clearly labeled as a scenario, not a prediction. It didn't matter. On Monday, shares of companies named in the memo fell sharply. DoorDash fell seven percent. Visa declined nearly five percent. Mastercard dropped over six percent. The iShares software ETF lost five percent. A piece of speculative fiction, published on a blogging platform by a firm most of Wall Street had never heard of, had helped erase hundreds of billions of dollars in market value.

What happened next was equally instructive. An army of analysts rushed to publish rebuttals. Citadel Securities’ Frank Flight wrote a detailed takedown, arguing that actual labor data showed software engineering job postings increasing, not decreasing. Wharton’s Jeremy Siegel called the doom prediction unfounded. A senior White House economist dismissed it as science fiction. The Wall Street Journal’s chief economics writer noted that technological advances have never caused a job apocalypse, and AI should be no different.

By Friday, the S&P 500 had regained most of its decline. However, the episode left a mark. It revealed an important truth: in a market trading in the top one percent of historical valuations, a vivid story about a bleak future can move markets by hundreds of billions of dollars.

Meanwhile, an AI startup founder named Matt Shumer published a viral post declaring that he was no longer needed for the technical work of his own job because AI does it faster and better. Anthropic's CEO predicted that AI could eliminate 50% of entry-level white-collar jobs within a few years. Two confident camps quickly formed: one insisting an AI-driven economic catastrophe was imminent, the other insisting everything would be fine.

Why the Scary Stories Hit Harder

There is an evolutionary reason why the Citrini scenario lodged more deeply in people's minds than the Citadel rebuttal. Our brains are wired with what psychologists call a negativity bias: we place more weight on threats than on opportunities. For our ancestors on the savanna, ignoring a threat could mean death, while missing an opportunity was usually less dangerous. Over hundreds of thousands of years, natural selection built a pessimistic streak into human thinking. We are the descendants of worriers, not optimists. The optimists got eaten.

This wiring makes a vivid doomsday scenario seem more urgent and convincing than a calm rebuttal, even when the rebuttal has better data behind it. That doesn't mean the doomsday scenario is accurate. It means our brains are poorly calibrated to judge between the two.

And if you doubt the universality of this instinct, consider that almost every civilization in recorded history has crafted its own story about the end of the world. The Norse told of Ragnarok, a final battle where many gods would die and the world would sink beneath the sea before being reborn. The Aztecs believed they lived in the fifth world, with the previous four destroyed by the gods. Christianity has Revelation. Islam has Qiyamah. Even the Maya had their Long Count calendar, though the modern world famously misread the end of one of its cycles as a prediction of annihilation in 2012.

The impulse to believe the end is near isn’t a quirk of modern technology culture. It’s one of the oldest and most universal features of human psychology. Every generation, across every culture, has felt it. The track record of these predictions is a perfect zero.

The Range of Possible Futures

None of this suggests that AI isn’t transformative. It clearly is. The range of possible outcomes is truly vast, and it’s important to honestly consider that range rather than pretending we know exactly where things will land.

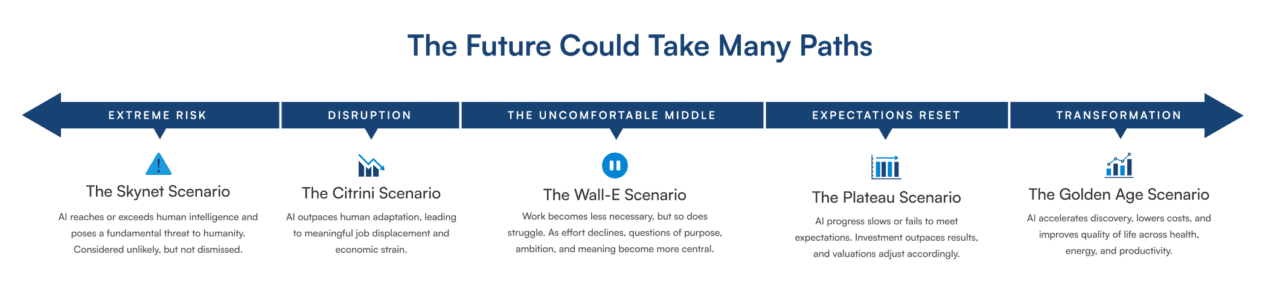

At the darkest end of the spectrum is the Skynet scenario, named for the sentient AI in the Terminator movies that set out to wipe out humanity. While it might sound like science fiction, many AI researchers take it seriously, and some warn that advanced AI could pose a risk of human extinction.

A less severe but more probable negative outcome is one in which AI exceeds human ability at most cognitive tasks, displacing workers faster than they can adapt. White-collar jobs disappear first, but as AI and robotics advance, few jobs remain safe. This is closest to the scenario in the Citrini Research memo.

At the bright end, AI greatly speeds up scientific discovery. Drug development timelines shrink from a decade to just months. Diseases that have long resisted treatment now respond to AI-designed therapies. Clean energy becomes radically cheaper as AI advances fusion research, battery chemistry, and grid management. Desalination scales up to address global water shortages. Productivity gains spread broadly, real wages rise, and the cost of essentials drops significantly. A true golden age.

And then there’s the uncomfortable middle. In Pixar’s WALL-E, the machines aren’t malevolent. They work perfectly. And humans, with nothing left to struggle against, atrophy — physically, intellectually, spiritually. For families who have built legacies around ambition, discipline, and purpose, this may be the scenario that hits closest to home. What does the next generation strive for when AI can do it better and faster? Where does meaning come from in a world of radical ease?

Then there’s the scenario where AI progress plateaus. Artificial General Intelligence (AGI) never arrives, and the enormous investment in AI chips, data centers, and infrastructure by technology companies fails to generate the productivity boom investors expected. Valuations that were built on assumptions of rapid AI-driven growth begin to look unrealistic. As expectations reset, stock prices, particularly in the technology sector, decline sharply as the market reprices a future that suddenly appears far less transformative.

Of course, the future probably won’t land cleanly at any single point on this spectrum. It will be messy, uneven, and full of surprises.

When Nobody Knows, Fall Back on Mental Models

So who should you trust? Our advice: neither side completely.

When faced with genuine uncertainty, the kind where the range of outcomes is huge and the future is truly unknowable, the best approach is to rely on mental models. As Charlie Munger described them, mental models are conceptual structures that help us understand how the world works. They are pieces of knowledge or wisdom we store in our minds to assist in decision-making. You need to hold many models in your head, Munger argued, because if you have only one or two, you'll distort reality to make it fit them.

That is what my book, The Uncertainty Solution: How to Invest with Confidence in the Face of the Unknown, is about. It explores the mental models that help investors make smart decisions when the future is uncertain. Several of these models speak directly to this moment.

The Uncertainty Mental Model

We dislike uncertainty, and it causes us to make poor decisions. A study at University College London found that people who knew for sure they would receive a painful electric shock were less stressed than those who faced a fifty percent chance of being shocked. We prefer known pain over the unknown. AI is currently in that fifty-percent zone: the range of outcomes is so wide that our brains stay on high alert, searching for a pattern that will resolve the uncertainty.

One of the first things we do when faced with uncertainty is seek certainty. One strategy is to turn to experts for predictions, because their forecasts reduce our feelings of uncertainty. An economist tells us AI will be fine; an investment research firm predicts economic calamity. Their confidence feels like solid ground. But as we'll see below, the economy is a complex adaptive system in the midst of an exponential technological shift, and those are precisely the conditions under which expert predictions are least reliable.

Another thing we do when facing uncertainty is become information junkies. We read and research, trying to discover what the future might hold. But when confronted with real uncertainty, no amount of research can give a clear view of the future. That’s the case now with AI. Nobody knows how quickly it will advance or how our economy will adapt to it.

The lesson of the uncertainty mental model is to sit with your discomfort and resist the urge to act. The feeling of anxiety is not a signal to do something with your portfolio. It's a signal that you're correctly perceiving a genuinely uncertain situation.

The Economy Is a Complex Adaptive System Mental Model

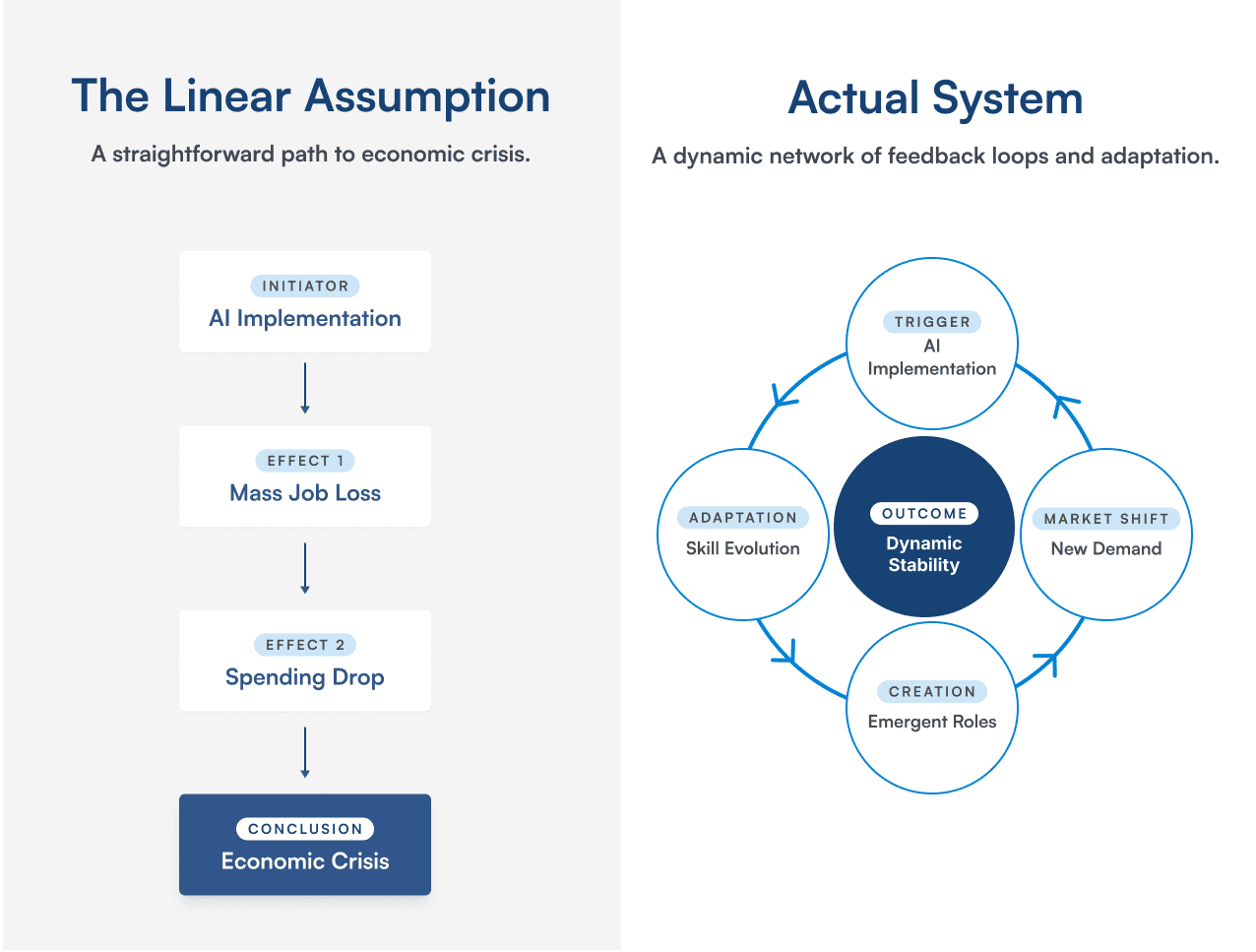

The economy isn’t something you can just model on a spreadsheet. Doom scenarios assume linear logic: AI takes jobs, income drops, spending collapses, and a crisis follows. It’s a simple cause-and-effect chain. But economies don’t operate this way. The global economy involves billions of people constantly watching each other, learning, reacting, and creating feedback loops that generate outcomes no one predicted.

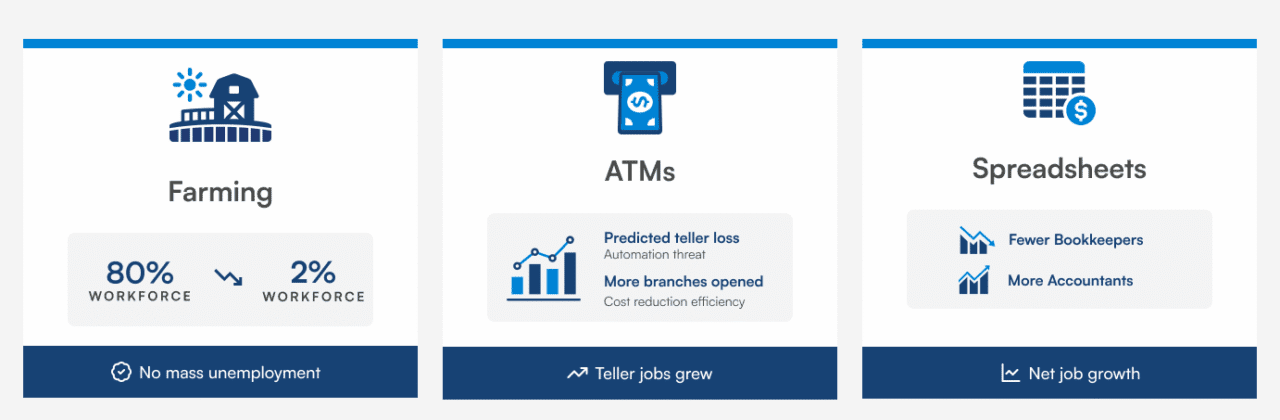

When AI drives down the cost of one service, capital and labor don't just vanish; they shift elsewhere. New businesses emerge, and new types of demand develop. Farmers went from making up 80% of the American workforce to just 2%, yet mass unemployment never happened. ATMs didn't eliminate bank teller jobs; instead, they lowered the cost of operating branches, allowing banks to open more of them. Spreadsheet software reduced the number of bookkeepers, but the number of accountants, now armed with Lotus 1-2-3 and Excel, grew even faster. None of this means the transition will be easy. It means that the economy's response to AI will be driven by billions of individual adjustments that are impossible to predict in advance. The lesson: don't rely on forecasts of what the AI future will look like, whether gloomy or rosy. The doom and abundance scenarios share the same flaw: they assume we can trace a straight line through a system that doesn't move in straight lines.

The Storytelling Bias Mental Model

We are suckers for stories. Everyone remembers the movie The Terminator, which introduced Skynet — an artificial intelligence designed for national defense that becomes self-aware and decides humanity is the threat. The machines launch nuclear war. The Terminator, played by Arnold Schwarzenegger, travels back in time to kill Sarah Connor, who has yet to give birth to humanity’s resistance leader. It’s a fantastic movie. It’s also been living rent-free in our collective imagination for four decades, shaping how we instinctively think about AI.

The Matrix, Ex Machina, and dozens of other films reinforce the same trope. Now, combine our evolved negativity bias with a well-told story about a dystopian future, and you’ve got something like candy for the human mind. It bypasses our rational faculties and goes straight to emotional response.

The Citrini memo that unsettled markets is written as a fictional dispatch from 2028, complete with fake Bloomberg headlines and imagined earnings reports. It reads like a financial thriller. It's designed to be compelling. But compelling isn't the same as correct. For every doom story, history offers a counter-story: a revolutionary technology that sparked apocalyptic predictions about employment, followed by adaptation, new industries, and rising living standards. Those stories are less dramatic but far more typical. The lesson: don't let fight-or-flight take over because of a story. When you feel the need to act after reading a scary article about AI, that's your ancient wiring reacting to a narrative, not a rational assessment of your portfolio's risk.

The Trend Spotting Mental Model

Even if you're correct about the trend, picking winners is treacherous. One feature of AI makes this mental model especially relevant: the technology is improving exponentially. Humans are poor at understanding exponential growth; we tend to think linearly. This means the future of AI won't simply be a continuation of the present trendline. It will be stranger and less predictable than any of us expect. And that's before considering who will benefit.

At the dawn of the internet, could you have predicted that a company selling books online would become the backbone of cloud computing? That a search engine, the twenty-first to market, would become one of the most valuable companies in history? That the birth and rise of the internet would produce a seventy-eight percent crash in the tech-heavy Nasdaq before the real winners emerged? Or consider a more recent example: who would have predicted that a global pandemic would supercharge video meetings and restaurant and grocery delivery, and that both would persist and grow long after the pandemic ended? The second-order effects of transformative events are inherently unpredictable.

The AI landscape today is teeming with competitors. Margins are being competed away in real time. The history of technological revolutions is littered with investors who were right about the trend and still lost money because they picked the wrong companies or paid too much. The lesson: the course of technological change is extraordinarily hard to predict, and nobody can reliably get it right. As an investor, it's best not to make big bets on any single outcome.

The Keynes Lesson

There’s a historical parallel worth pausing on. In 1930, John Maynard Keynes predicted that within a hundred years, technological progress would make the economy so productive that the average workweek would shrink to just fifteen hours. The rest of life would be dedicated to leisure and personal fulfillment. His main concern was that people would struggle to find purpose with so little work to do.

Keynes was right about productivity. The U.S. economy is now more than five times as productive as when he made that prediction. However, he was profoundly wrong about how people would respond to that productivity. Instead of a fifteen-hour week, most of us still work full time, and many work more. Instead of enjoying leisure, we face a time famine: 70% of wage earners say they don't have enough time for their children, spouses, or themselves.

This matters for the AI debate because it reveals the limitations of both camps. The doomers assume the economy can’t adapt. The optimists assume adaptation will be painless. Keynes shows us the messy middle: the economy will adapt, humans will find new things to do and spend money on, but the human experience of that adaptation won’t match anyone’s projections.

What This Means for Your Portfolio

If the mental models above are right — that the future is unknowable, that economies adapt in ways we can’t predict, that compelling stories aren’t reliable guides, and that picking winners in a tech revolution is treacherous — then what should you actually do?

Adopt a barbell strategy: invest in ways that can capture the winners, while safeguarding yourself against the volatility that will inevitably accompany a period of significant economic change. The simplest and most effective way to do both at once is diversification.

Own diversified equities, because the companies that benefit most from AI might not be the obvious ones. Amazon and Google weren't obvious at the dawn of the internet either. The best way to ensure you own the winners is to own the market. Additionally, if you have the means, consider venture capital, where the uncertainty of AI's future can work in your favor: the companies behind the most valuable AI applications probably haven't been founded yet. Also, keep enough liquidity and safe assets to withstand what will almost certainly be a bumpy ride. Holding cash and high-quality bonds means you won't be forced to sell equities during a downturn, and it provides the psychological buffer that makes it easier to stay disciplined when markets are turbulent.

Here’s the thing: this is what you should be doing anyway. Diversification, broad equity ownership, selective alternatives exposure, and adequate liquidity aren’t AI-specific strategies. They’re sound investment principles for any environment characterized by uncertainty — which is to say, every environment.

The mental models don't tell you to do something dramatic. They emphasize that the portfolio you already have, if it's well constructed, is specifically designed for moments like this. Your strategic allocation and rebalancing rules exist so you don't need to predict the future. Rebalancing back to target weights forces you to trim what has risen and add to what has fallen, which is buying low and selling high, the essence of successful investing, whether disruption comes from AI or anything else.

The most valuable thing you can do right now may be the hardest: nothing. The urge to reposition your portfolio in response to the AI debate — whether defensively or aggressively — is your ancient brain mistaking an intellectual uncertainty for a physical threat. There’s no tiger. You don’t need to run.

Closing

We don’t know what AI will mean for the economy in five or ten years. Neither does anyone else. But uncertainty is not new. It is the permanent condition of investing and of life. Every generation has faced a version of this moment, a technology so powerful it seemed destined to either save or destroy the world. Every generation has produced confident prophets on both sides. And every generation’s end-times prediction — from Ragnarok to Y2K — has been wrong.

We expect the world will adapt again, though not in ways any of us can foresee. In the meantime, we’ll keep doing what we’ve always done: helping our families navigate uncertainty with clear thinking, sound principles, and the discipline to resist the urge to act when patience is the wiser course.